Why I Started This Substack

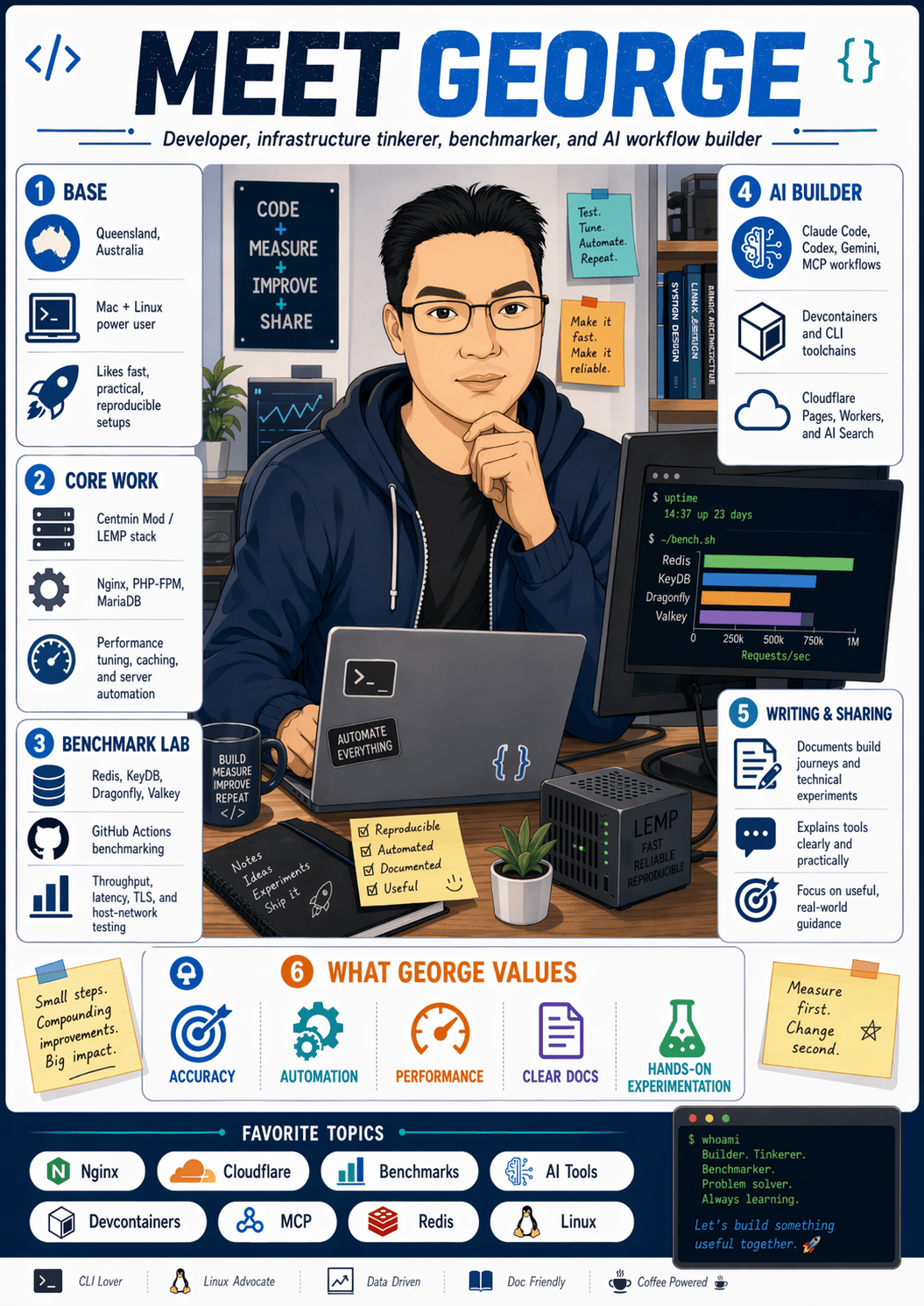

I’ve spent the last 16 years building and maintaining Centmin Mod, a LEMP stack tooling ecosystem for Linux web server environments. I have consulted for some of the largest online community forum sites running vBulletin and XenForo, optimising their servers for scalability and performance and squeezing every ounce of performance out of them. I am obsessed with server performance and pagespeed optimisation. I have used Cloudflare for over a decade, have been a Cloudflare Community MVP since 2018, and have extensive experience with all of Cloudflare’s Free, Pro, Business and Enterprise plans. Before that, my obsession was PC overclocking and PC hardware reviews. The thread through all of it: always looking for better ways to improve existing solutions.

That is why I started this Substack.

I want to document how I’m using AI to build useful web apps, improve developer workflows, and explore better tools and systems. Here I’ll share what I’m building, the workflows and tools I use, and an honest look at what works and what doesn’t.

I want to show more than finished results. I want to share the process too.

What I tried. What changed. What worked. What didn’t.

Less hype, more implementation.

Like most folks, I started my AI journey with the launch of OpenAI’s ChatGPT website, jumping almost immediately on the ChatGPT Plus $20/month paid subscription when it came out. Then came the Claude Pro $20/month and Google Gemini AI Pro $20/month subscriptions.

Most of it was for coding at first. I had a lot of ideas and devops tooling on the back burner, and AI chat helped me move them forward. AI helped fill in my knowledge gaps and gave me deeper insights as I asked more questions. I would always have each LLM evaluate the other models’ responses to improve the final product. Unfortunately, I kept running into token context limits back then, for both input and output tokens: the LLMs of that time had context windows below 128K tokens, with maximum outputs of 4K-8K tokens. This multi-AI consultation process would eventually lead me to create my Claude Code /consult-codex and /consult-zai Skills.

I was still using my trusty Sublime Text editor. I had tried Visual Studio Code before but never really took to it; each time, I would eventually return to Sublime Text because I was used to its key mappings and shortcuts.

It was probably several months later, around January-February 2024, that I decided to try Cline in Visual Studio Code. I paired Cline with the OpenRouter API, burning through tokens. If I recall correctly, the models I used most on OpenRouter at that time were Google’s Gemini models. This was a game changer: I was no longer stuck with the context limits of the web chat interface, and I could give the AI models much more context to work with.

From March 2024 to June 2025, I was on the Claude Pro $20/month plan. Up until this point, I had only used my Claude plan within the web chat interface. Claude Code had launched in February 2025, but I still hadn’t tried it.

One of my earlier AI-coded projects using Cline and Gemini 2.0 models was or-cli.py, my Python script for the OpenRouter API, written back in February 2025 when I discovered that the wonderful OpenRouter API provided a lot of free LLM usage. I took full advantage of that free Google Gemini model usage and dabbled in Cloudflare AI Gateway for the first time.

That changed in March 2025 when I started using Claude Code within Visual Studio Code. This was the real game-changing moment, as I decided to focus and go all in on learning Claude Code. My Claude Code usage grew, and by July 2025 I had upgraded to the Claude Max $100/month plan, which I have been on ever since (~10 months).

It was around this time that I created my Claude Code starter template GitHub repository to document and share my Claude Code journey with others. It includes a custom CLAUDE.md memory bank system modelled after Cline’s memory bank system, which I had gotten used to. I then added my Claude Code skills, agents/commands and knowledge to the repo, which has grown to nearly 2.2K GitHub stars and consistently averages 1,500 to 3,000 visitors per week!

By now I had gotten used to Visual Studio Code and no longer use Sublime Text. This also meant I got to play with other AI coding tools in Visual Studio Code, including Kilo Code, OpenAI Codex CLI, Google Gemini CLI and OpenCode. Kilo Code is clearly one of my top favourites, behind Claude Code and Codex CLI.

Also, as the open source maintainer of Centmin Mod, my GPLv3-licensed LEMP stack, I got free access to the GitHub Copilot Pro plan, which normally costs $10/month.

So right now, my AI spend includes:

Claude AI Max US$100/month + 10% Australian GST

OpenAI ChatGPT Plus US$20/month + 10% Australian GST

Google Gemini AI Pro US$20/month + 10% Australian GST

GitHub Copilot Pro US$10/month + 10% Australian GST (currently free)

ZAI GLM Coding plan US$129/year (64% discount) + 10% Australian GST

t3.chat US$8/month + 10% Australian GST

My AI coding setup has just migrated from multiple iTerm2/tmux terminals plus Visual Studio Code to Warp (warp.dev), which I use purely as a multi-window/tab terminal without the AI bells and whistles. I also have Claude and multi-AI CLI Visual Studio Code dev containers built on Debian Docker images, which I documented at https://claude-devcontainers.centminmod.com.

Just a few days ago, I also revisited voice dictation and am currently using Wispr Flow. Folks can get a free month of Wispr Flow Pro using my invitation link.

I hope this gives readers a bit of background on my AI journey to date.

The next post is a concrete example: I tested 11 AI models on timezone scheduling; none of them got it right, so I built something that did.

If you’re interested in practical AI building for web apps, developer workflows and infrastructure, subscribe for future posts. You can also follow my shorter updates on Threads (@george_sl_liu) and Bluesky (@georgesl.bsky.social).